For 100% free, unlimited usage, use a Parakeet transcription model and Apple Intelligence for AI processing. This guide shows how to keep transcription on your device and avoid usage-based API costs. Parakeet handles voice-to-text locally, while Apple Intelligence handles simple cleanup, short summaries, and lightweight writing help.

Overview

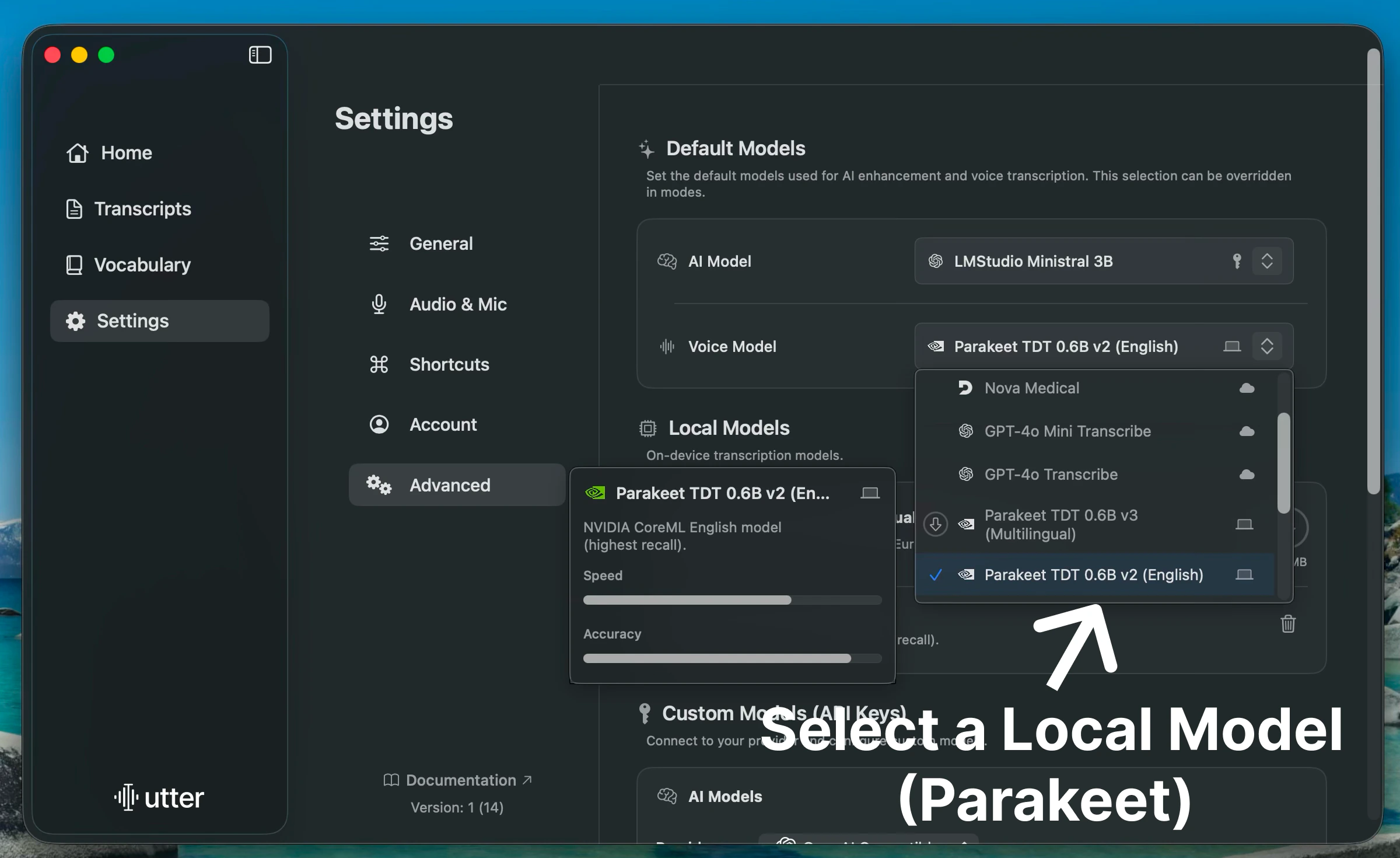

Use Utter locally in two stages to avoid paid transcription or AI usage:

- Local transcription: Choose an on-device speech model (Parakeet) for voice-to-text.
- Free AI processing: Choose Apple Intelligence as your default AI model.

Stage 1: Use Local Transcription Models

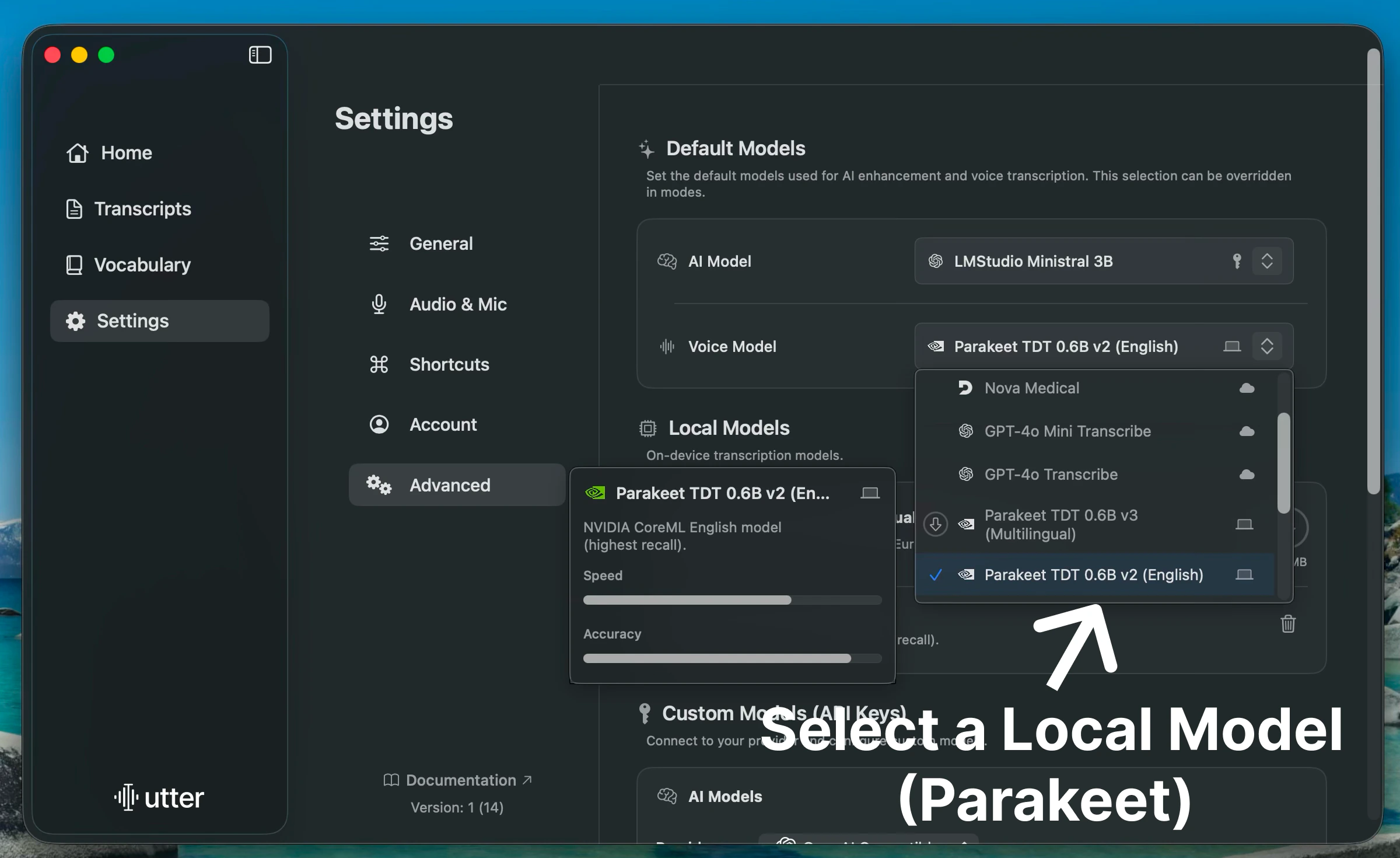

To make transcription fully local, switch the default transcription model to a Parakeet model.

Change the Default Transcription Model

At the top of Default Models, select a Parakeet model for Transcription. Built-in models are marked with a MacBook icon in the model list.

When a Parakeet model is selected, transcription stays on-device, requires no internet, and remains private.

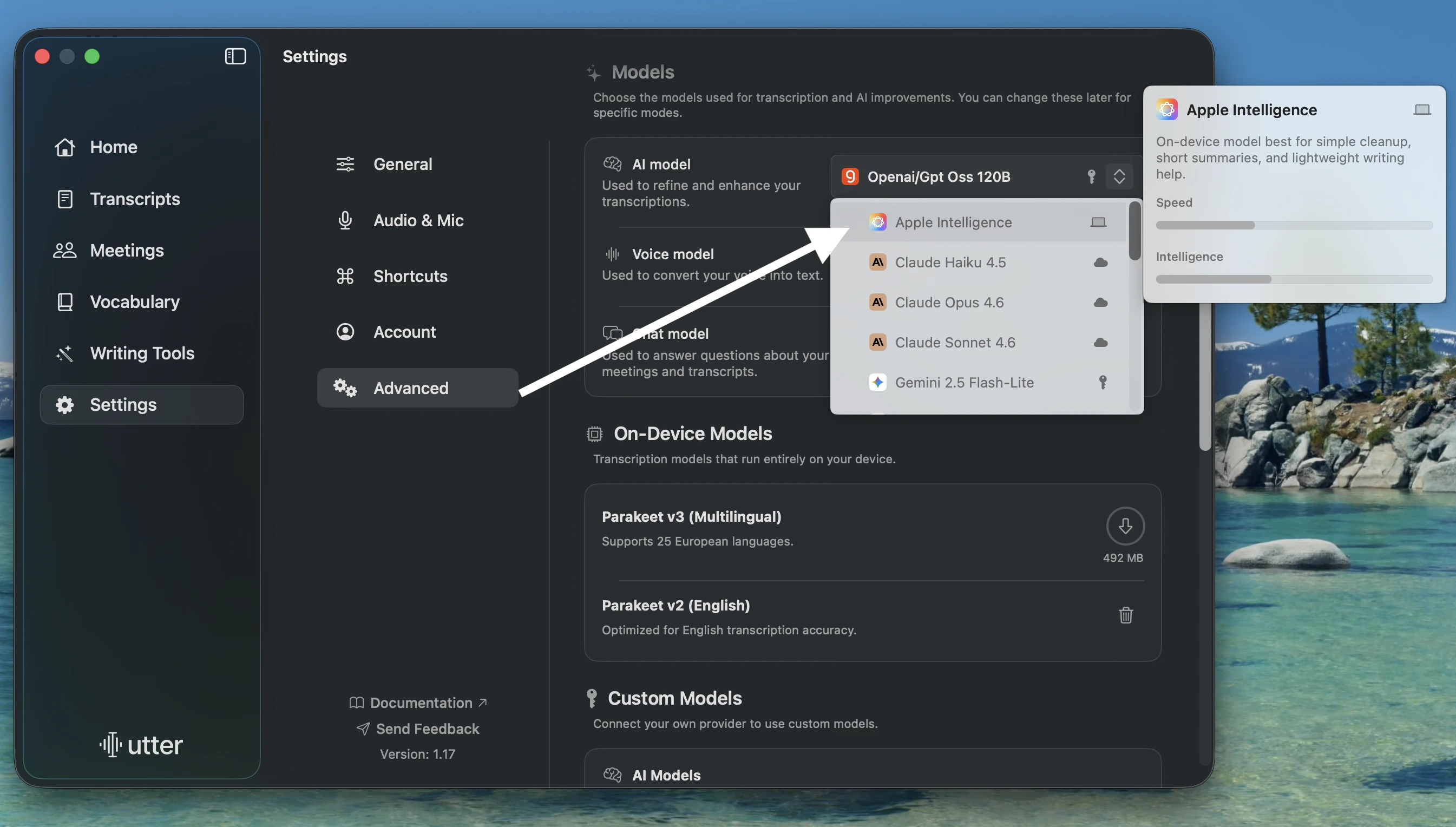

Stage 2: Use Apple Intelligence for AI Processing

Apple Intelligence is the recommended local AI model for most users. It works well for simple cleanup, short summaries, and lightweight writing help.

Apple Intelligence requires a Mac with Apple silicon, macOS Sequoia 15.1 or later, supported device and Siri languages, and about 7 GB of available storage. Turn it on in System Settings > Apple Intelligence & Siri before selecting it in Utter.
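If you are not sure whether your Mac qualifies, the Python sketch below checks the three requirements a script can see: Apple silicon, the macOS version, and free disk space. It cannot check device or Siri language support, so confirm those in System Settings. The thresholds come from the requirements above; reading "about 7 GB" as 7 × 10⁹ bytes is an assumption, and a Python interpreter running under Rosetta reports `x86_64` even on Apple silicon.

```python
import platform
import shutil

# Thresholds from the requirements above. Treating "about 7 GB" as
# 7 * 10**9 bytes (decimal gigabytes) is an assumption.
MIN_MACOS = (15, 1)
MIN_FREE_BYTES = 7 * 10**9


def parse_version(text: str) -> tuple:
    """Turn a version string such as '15.1.1' into (15, 1, 1)."""
    return tuple(int(part) for part in text.split(".") if part.isdigit())


def check_requirements(mac_version: str, machine: str, free_bytes: int) -> list:
    """Return unmet requirements; an empty list means these checks passed.

    Device and Siri language support cannot be checked from here.
    """
    problems = []
    # Python running under Rosetta reports "x86_64" even on Apple silicon.
    if machine != "arm64":
        problems.append("Apple silicon (arm64) required")
    if parse_version(mac_version) < MIN_MACOS:
        problems.append("macOS Sequoia 15.1 or later required")
    if free_bytes < MIN_FREE_BYTES:
        problems.append("about 7 GB of free storage required")
    return problems


if __name__ == "__main__":
    release, _, machine = platform.mac_ver()  # release is "" outside macOS
    free = shutil.disk_usage("/").free
    issues = check_requirements(release, machine, free)
    if issues:
        for issue in issues:
            print("Missing:", issue)
    else:
        print("Hardware, macOS version, and storage look OK.")
```

If every check passes but Apple Intelligence still does not appear, the remaining suspects are the language settings and whether the feature is turned on.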

What to expect: Once it is selected, new AI improvements run through Apple Intelligence, so no paid AI API key is required. Apple Intelligence runs many requests on-device and can use Private Cloud Compute for more complex ones.

If that did not work: Check that Apple Intelligence is turned on in System Settings > Apple Intelligence & Siri, confirm your Mac and language are supported, then restart Utter and select Apple Intelligence again.

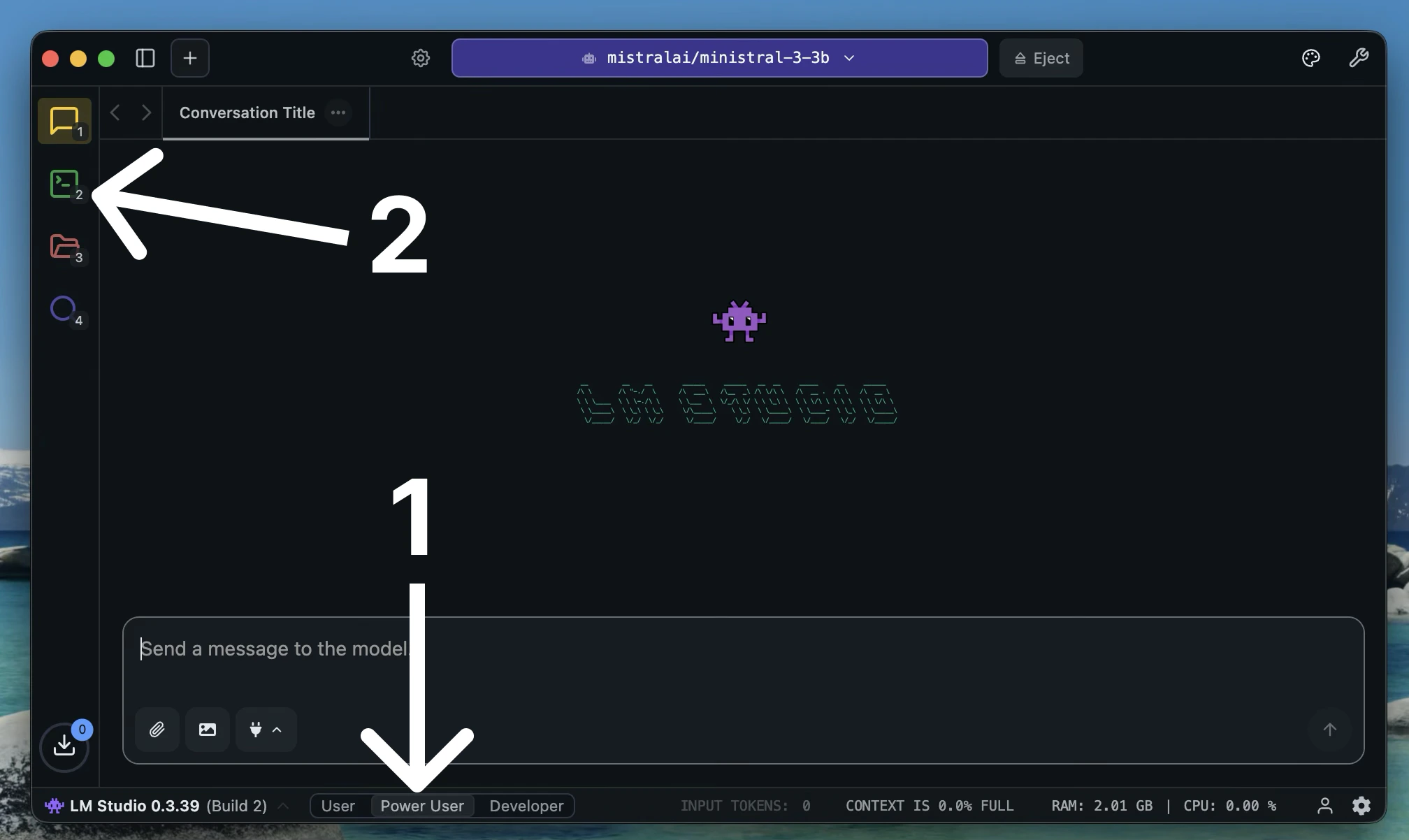

Advanced Fallback: Use LM Studio or Ollama

Use this path only if Apple Intelligence is not available on your Mac, or if you already run a local OpenAI-compatible server.

LM Studio

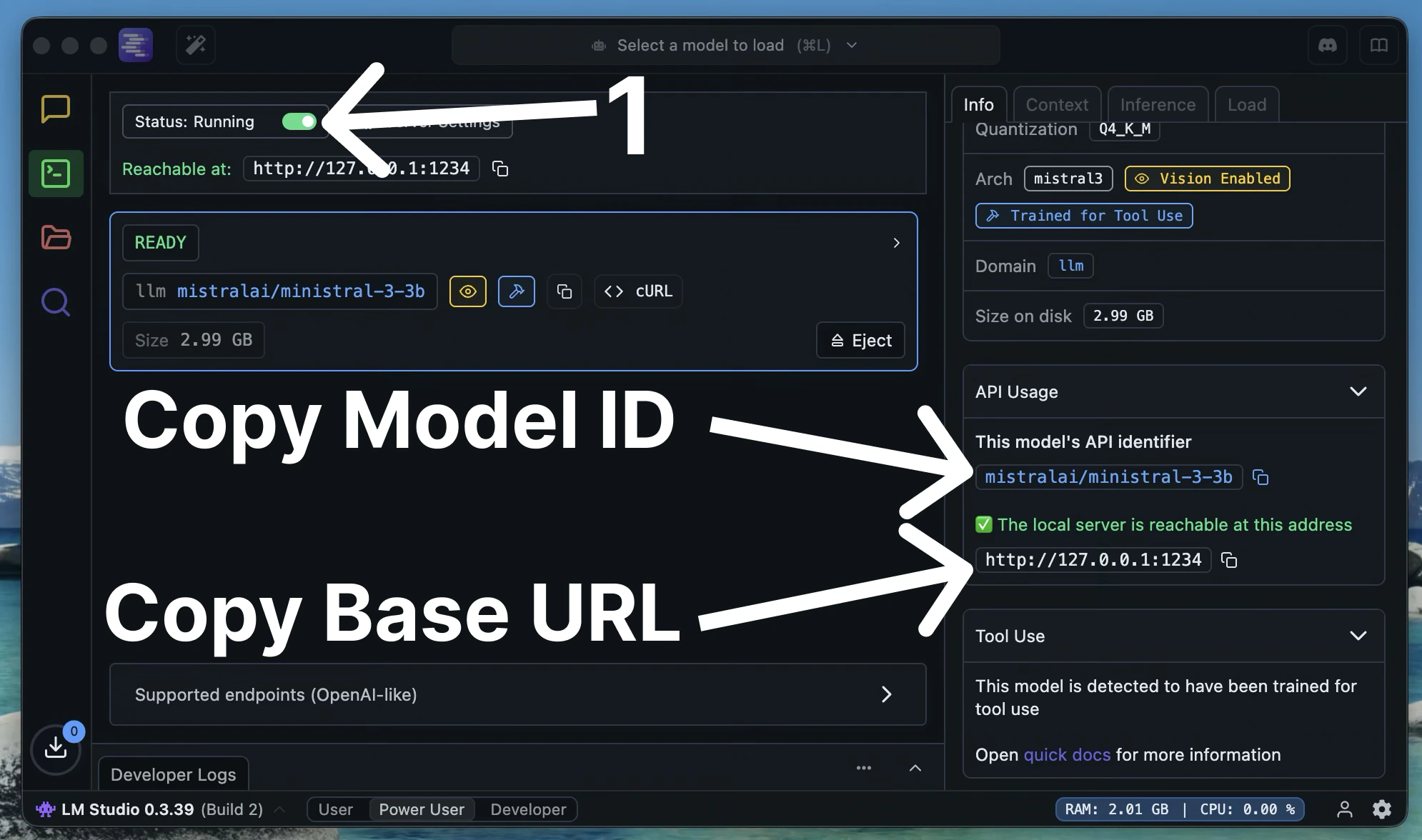

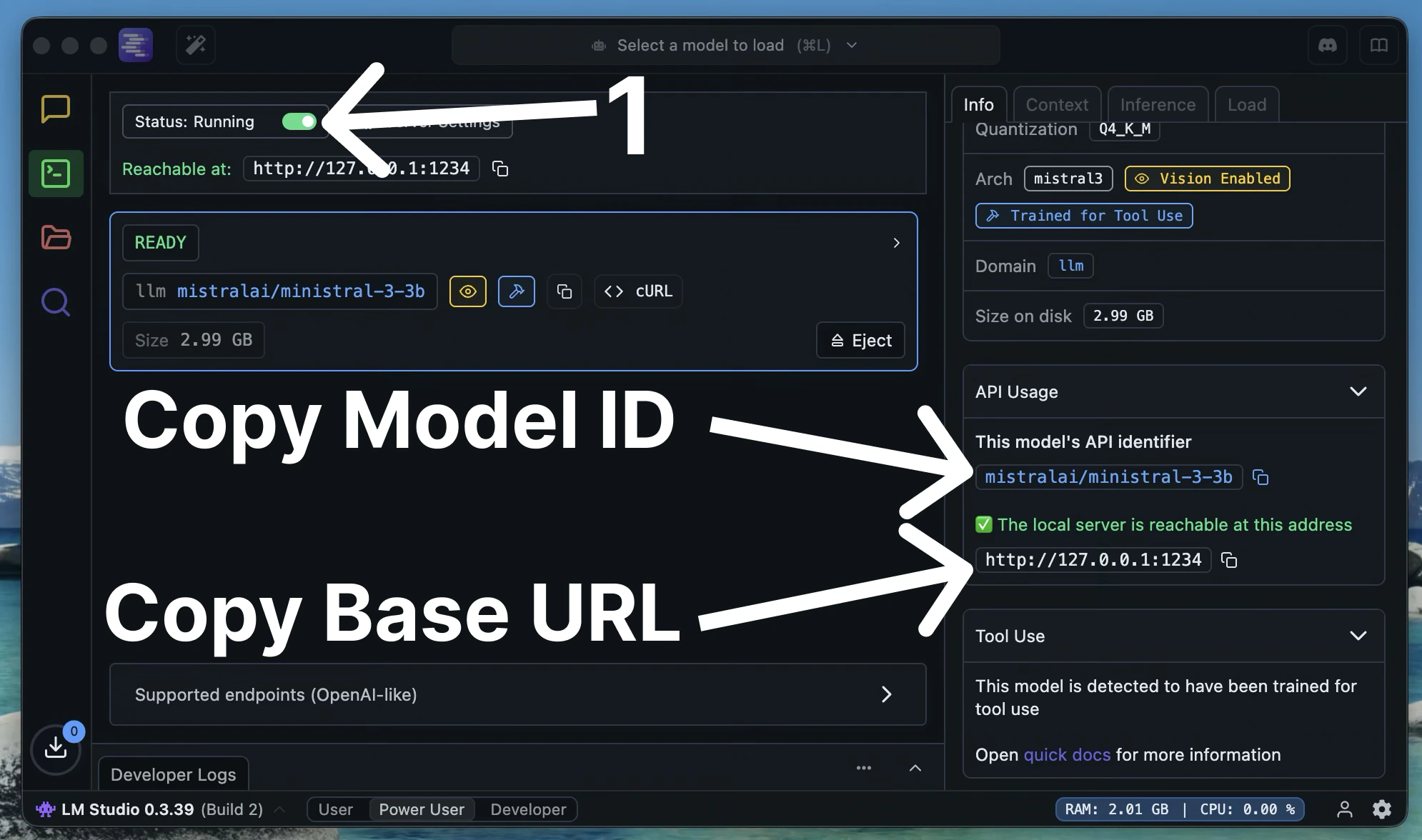

Load a Model and Start the Server

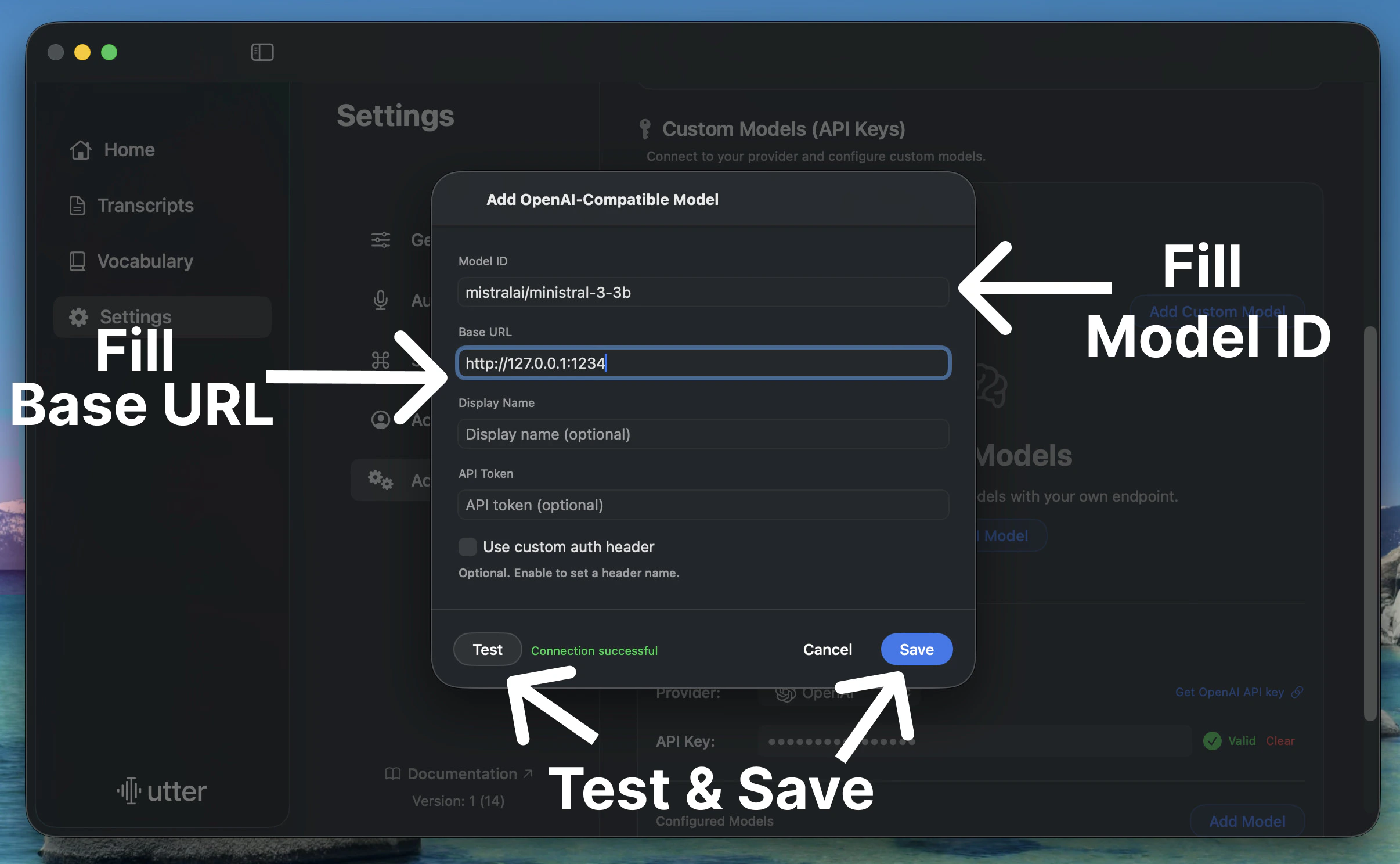

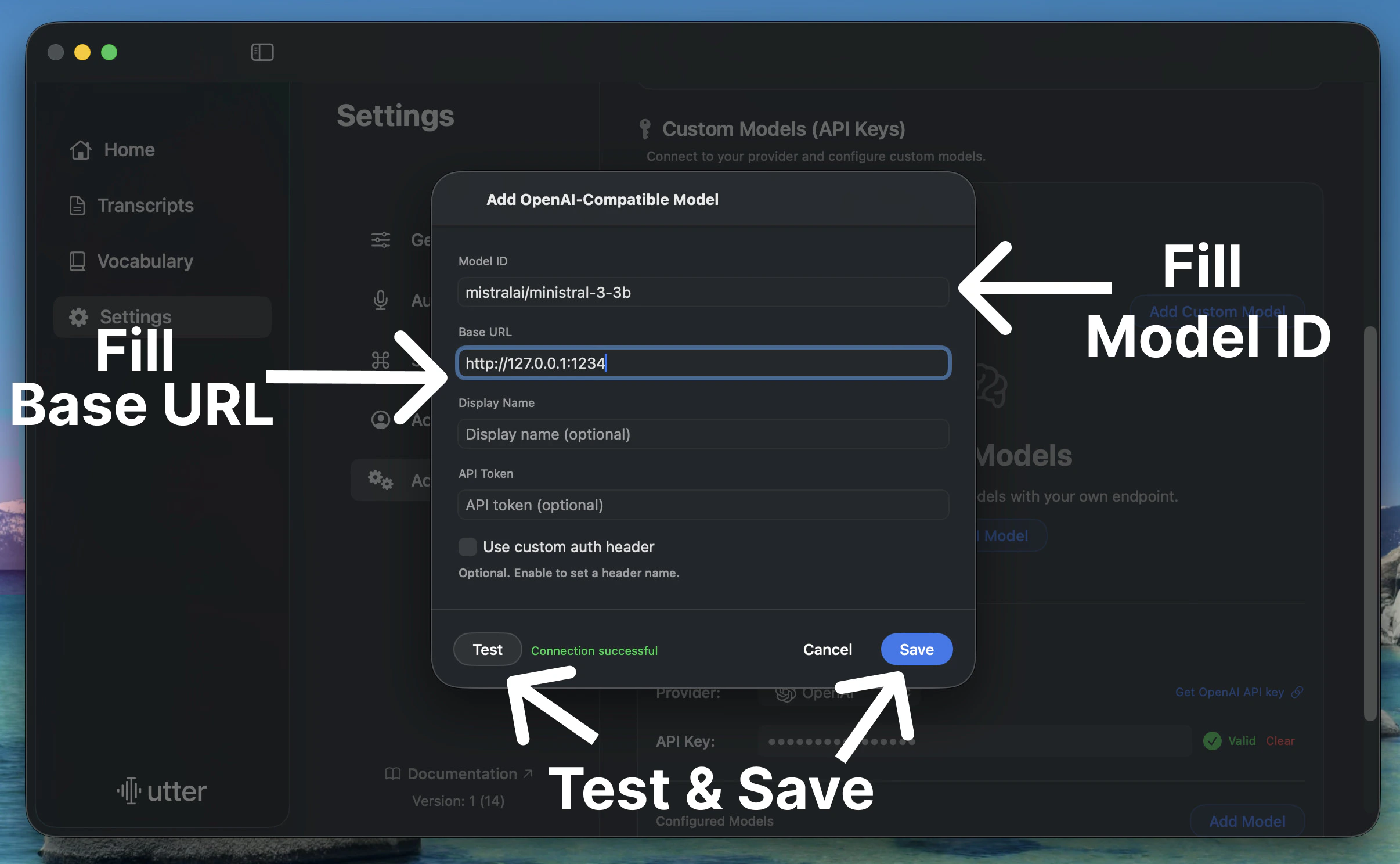

Make sure a model is loaded, enable the server, then copy the Model ID and Base URL from the Developer tab.
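To confirm the server is running and see the exact Model ID it exposes, you can query its OpenAI-style `/models` endpoint. This Python sketch uses only the standard library and assumes LM Studio's usual default Base URL, `http://localhost:1234/v1`; replace it with the Base URL shown in your Developer tab.

```python
import json
import urllib.request

# Assumed default for LM Studio; paste the Base URL from your Developer tab instead.
BASE_URL = "http://localhost:1234/v1"


def extract_model_ids(payload: dict) -> list:
    """Collect the "id" fields from an OpenAI-style /models response."""
    return [entry["id"] for entry in payload.get("data", []) if "id" in entry]


def list_local_models(base_url: str = BASE_URL, timeout: float = 5.0) -> list:
    """Ask the local OpenAI-compatible server which model IDs it exposes."""
    url = base_url.rstrip("/") + "/models"
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return extract_model_ids(json.load(response))


if __name__ == "__main__":
    try:
        for model_id in list_local_models():
            print(model_id)
    except OSError as error:  # URLError and timeouts are OSError subclasses
        print(f"Could not reach {BASE_URL}: {error}")
```

The IDs printed here are the values to paste into Utter as the Model ID.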

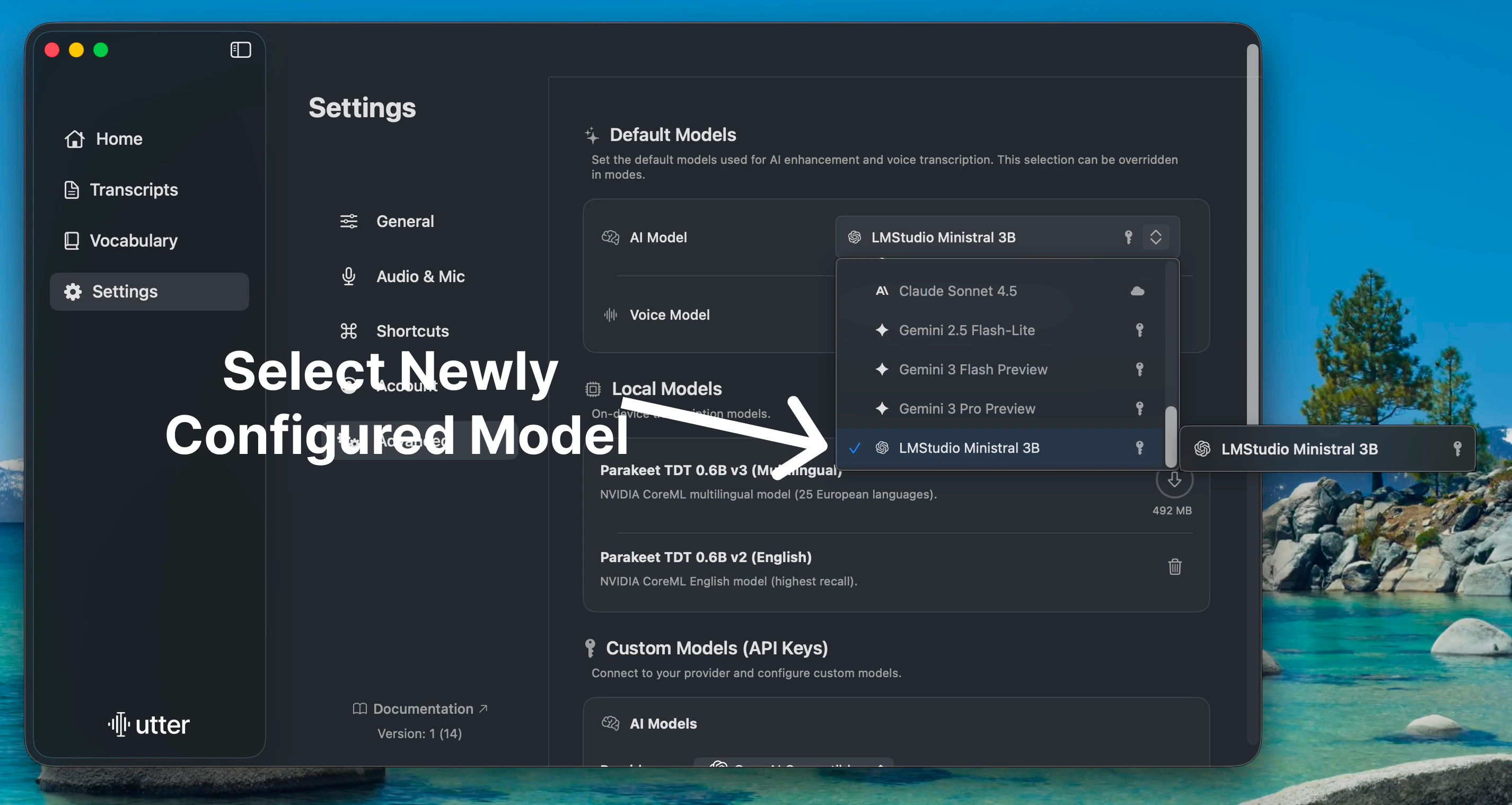

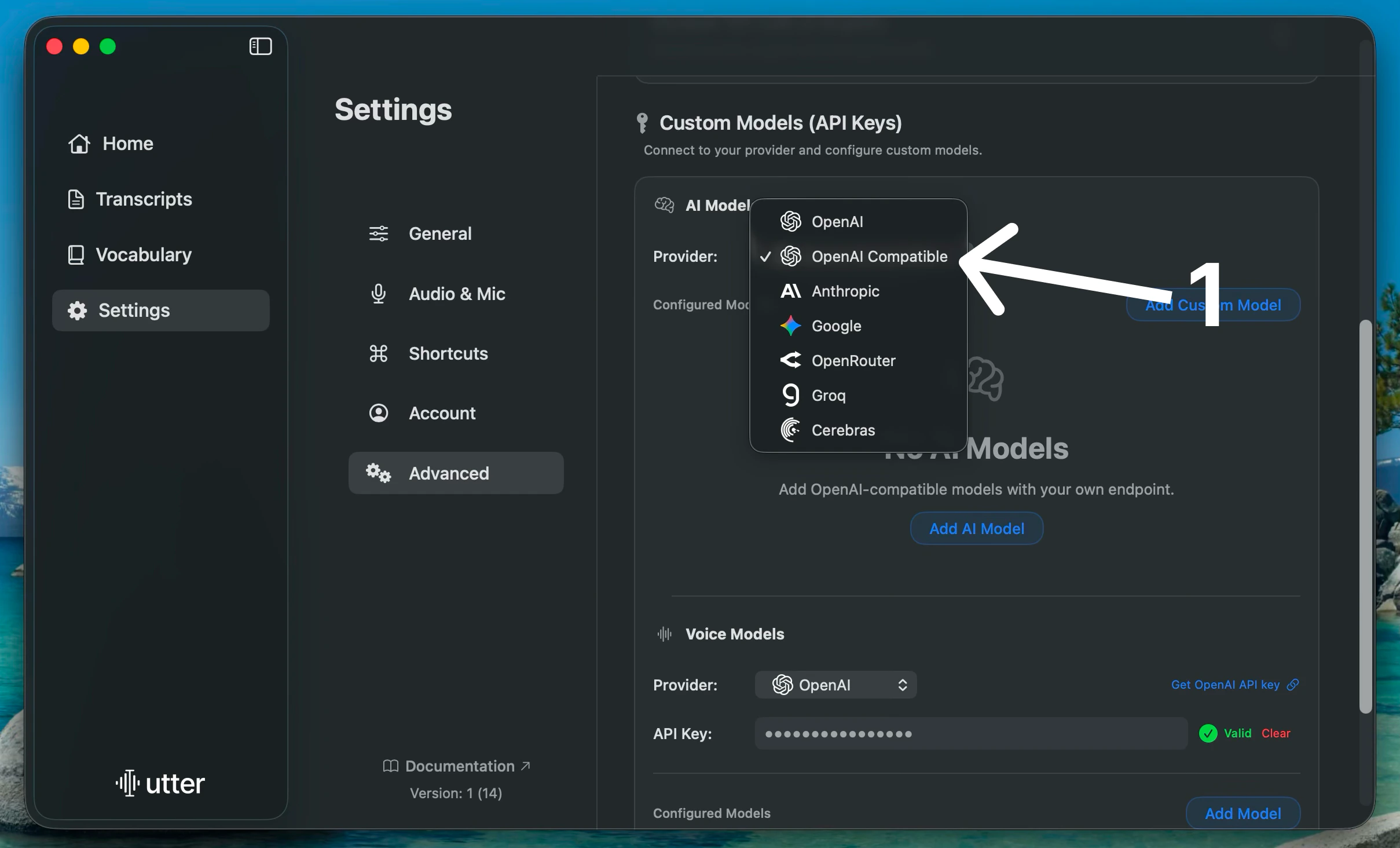

Open Utter Custom Models

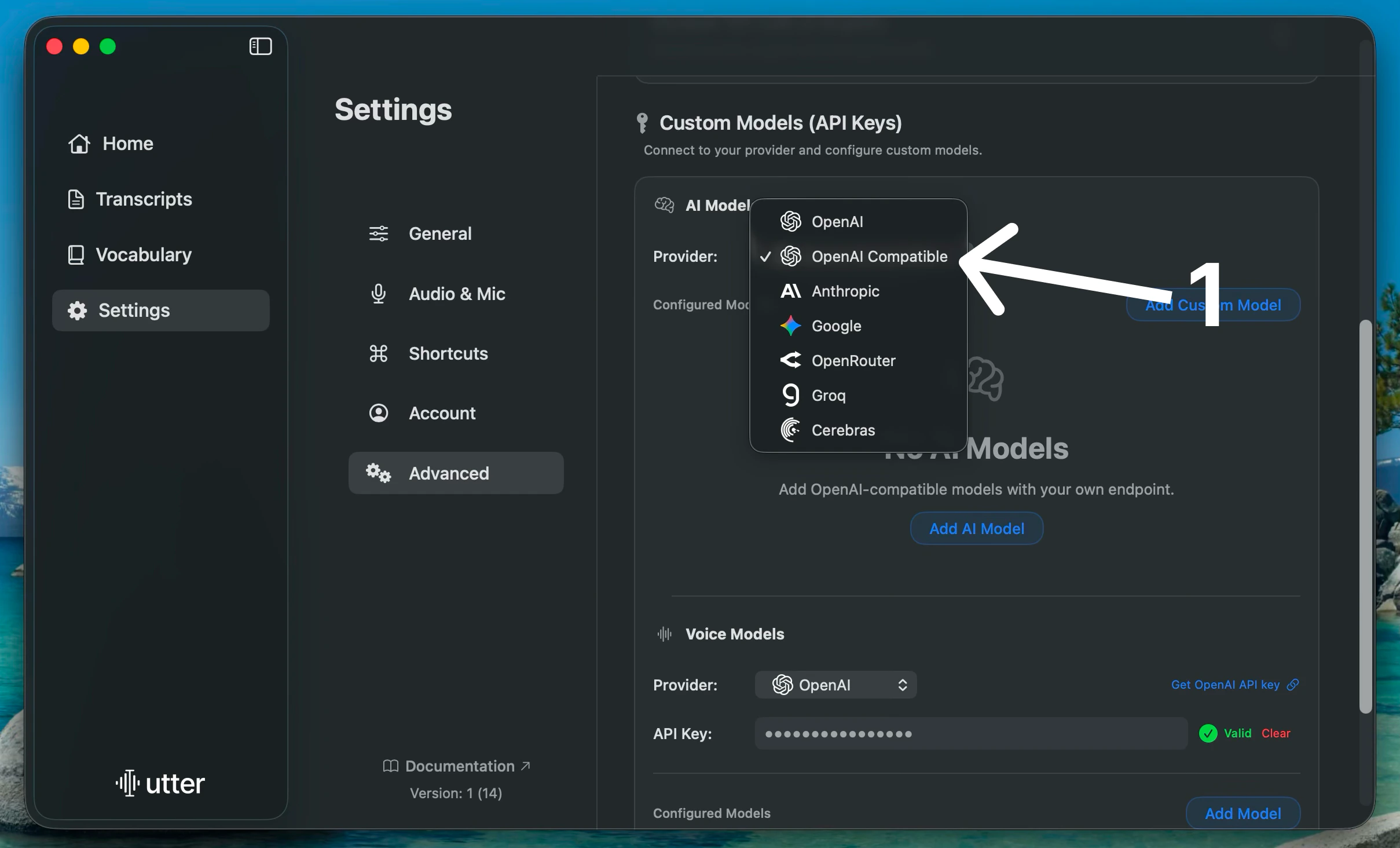

In Utter, go to Settings > Advanced > Custom Models, then choose Custom OpenAI Compatible as the provider.

Add the Local Model

Click Add Custom Model. Paste the Model ID and Base URL, click Test to verify the connection, then click Save.
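If Test fails, it can help to send a request to the server yourself, outside Utter. The Python sketch below posts a one-line prompt to the OpenAI-style `/chat/completions` endpoint. It is a hypothetical manual check, not how Utter's Test button works (that is not documented here). The Base URLs are the usual defaults for LM Studio and Ollama, and `your-model-id` is a placeholder for the Model ID you copied.

```python
import json
import urllib.request

# Usual default Base URLs; adjust if your server uses another host or port.
DEFAULT_BASE_URLS = {
    "lm-studio": "http://localhost:1234/v1",
    "ollama": "http://localhost:11434/v1",
}


def build_chat_request(base_url: str, model_id: str, prompt: str) -> urllib.request.Request:
    """Build a minimal OpenAI-style chat completion request."""
    body = {
        "model": model_id,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 16,
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_test_prompt(base_url: str, model_id: str, timeout: float = 60.0) -> str:
    """Send one short prompt and return the model's reply text."""
    request = build_chat_request(base_url, model_id, "Reply with the word OK.")
    with urllib.request.urlopen(request, timeout=timeout) as response:
        reply = json.load(response)
    return reply["choices"][0]["message"]["content"]


if __name__ == "__main__":
    base_url = DEFAULT_BASE_URLS["lm-studio"]
    model_id = "your-model-id"  # placeholder: paste the Model ID you copied
    try:
        print(send_test_prompt(base_url, model_id))
    except OSError as error:  # connection refused, HTTP errors, timeouts
        print(f"Test request failed: {error}")
```

If this prints a reply, the same Base URL and Model ID should work in Utter; a connection error usually means the server is not running or the Base URL is wrong.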

Ollama

If you already use Ollama, configure it as an OpenAI-compatible provider and follow the same Custom Models steps above. The key requirements are a local server URL and a model ID exposed by your Ollama setup.

Related

Bring Your Own Keys

Connect your own model providers and API keys.

Use Utter for Free

Set up free AI models for Utter.